Applying analytic technology at the point of video surveillance capture offers a range of strategic and tactical benefits. Emerging smarter camera solutions built on this premise have the potential to save both time and money for those who implement them. Furthermore, an advanced military surveillance solution architected around this methodology provides a futuristic framework for overcoming challenging issues and maximizing technology to aid situational awareness on the battlefield.

Balancing advancements in technology with efficiency can present daunting challenges that may require agencies to revamp traditional methods for fulfilling a mission. The Department of Defense and several other federal agencies face this dilemma across a range of technology breakthroughs. Although new products present superb opportunities to better protect our troops, advance national intelligence and strengthen our comprehensive security posture, developing the parallel operational policies needed to fully realize the benefits of this modern-day innovation remains a significant hurdle.

This article addresses the specific example of how communications network bandwidth is currently a significant bottleneck for camera systems collecting video information. It also describes how edge analytics can greatly reduce the data burden on already overloaded communications networks.

One of the largest obstacles to the full adoption of enhanced sensor technologies has been the limited bandwidth of legacy military communications networks. While these networks have evolved and improved over time, bandwidth demand has greatly outpaced supply. The high growth rate of bandwidth demand is driven by two main phenomena:

- Proliferation of connected devices (such as handheld voice/data radios and sensors)

- Increasing data volume produced by each device or sensor suite. For example, a Global Hawk unmanned aerial vehicle currently requires five times the bandwidth used by the entire U.S. military during Operation Desert Storm. [1]

Figure 1: Illustration of Metcalfe’s Law. [2] (Released)

Metcalfe’s Law

“Metcalfe’s law states that the value of a network grows as the square of the number of its users: V ~ N².” [2] Figure 1 illustrates the concept, with each user represented by a telephone. As the number of users in the network increases, the number of potential connections between them grows approximately as the square of the number of users: two users can have a single connection, five users can have 10 connections, and 12 users can have 66 connections. The more connections the network has, the higher its potential value. However, the available bandwidth quickly becomes the limiting factor on that value.
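The connection counts quoted for Figure 1 follow the pairwise-link formula n(n - 1)/2, which approaches n²/2 for large n; that quadratic growth is the "square" in Metcalfe's law. A minimal Python sketch of the arithmetic:

```python
def potential_connections(users: int) -> int:
    """Distinct pairwise links among `users` nodes: n(n - 1) / 2."""
    if users < 0:
        raise ValueError("users must be non-negative")
    return users * (users - 1) // 2


# Reproduce the counts quoted for Figure 1.
for n in (2, 5, 12):
    print(f"{n} users -> {potential_connections(n)} possible connections")
```

Each of those links is also a potential data flow, which is why bandwidth, rather than the number of users, ends up bounding the network's value.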

A military corollary to Metcalfe’s Law is the Iron Law of Bandwidth Usage, which states that, “for military formations, using bandwidth expands to consume whatever amount of bandwidth is provided.” [3] This effect makes sense because DoD missions must maximize the value of the network. Therefore, the maximum number of connections must be made, enabling the maximum amount of data flow, and once again causing the network to be the limiting component of the entire system.

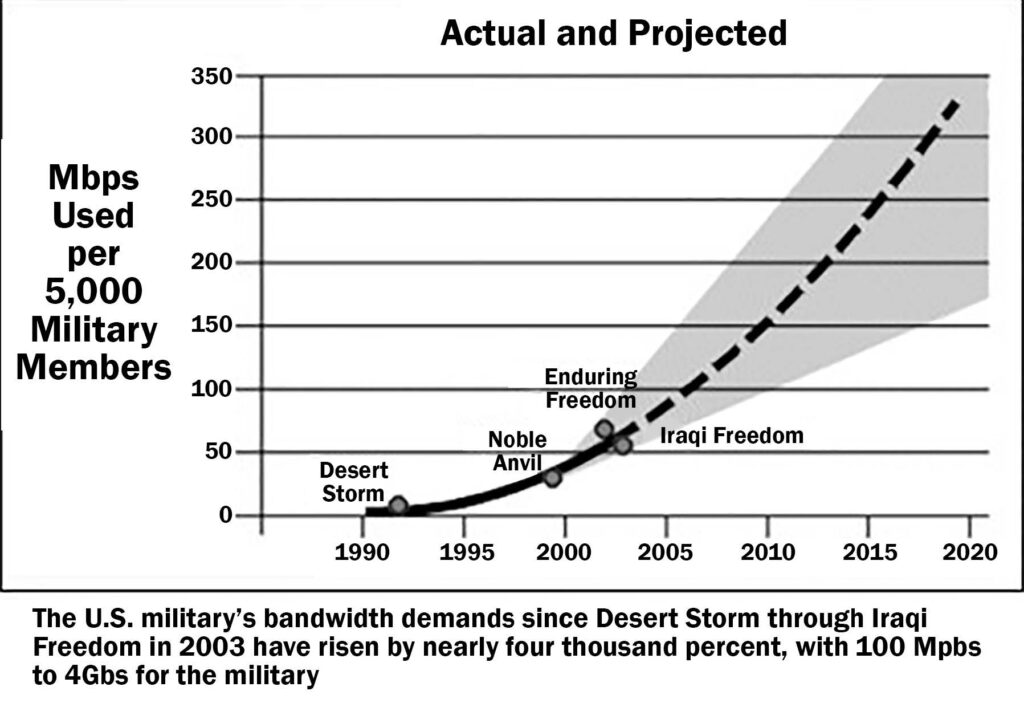

Figure 2 shows the growth in bandwidth used by the military from Operation Desert Storm in 1991 through Operation Iraqi Freedom in 2003, along with projected requirements for the present day and beyond. [1]

Figure 2: Growth in SATCOM needed to support 5,000 military members. [10] (Image courtesy of the U.S. Army/Released)

WIN-T Increment 2

In 2013, the U.S. Army began deploying the second increment of a new high-speed, high-capacity tactical communications network, Warfighter Information Network – Tactical (WIN-T) Increment 2. [4] The main network enhancement provided by WIN-T Increment 2 is networking on-the-move, which by definition requires multi-node hops across an ad hoc communications network. Each added hop between nodes increases latency and, because neighboring hops contend for the same wireless channel, reduces the throughput available between the endpoints.

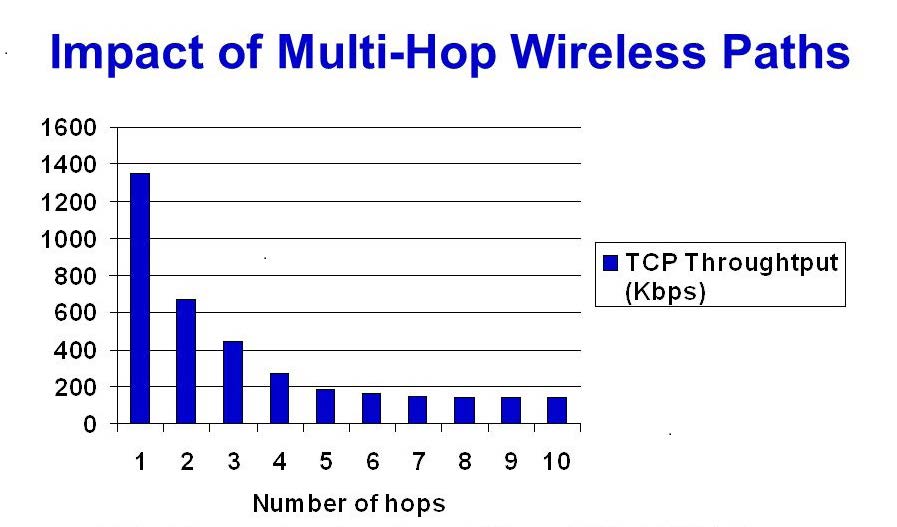

Figure 3 shows the effect of the number of hops on the throughput of a wireless network, in this case a 2 Mbps 802.11 (Wi-Fi) medium access control layer. [5] Throughput drops sharply over the first few hops and eventually stabilizes at less than 10 percent of the system's maximum bandwidth.

In summary, there is, and will likely always be, a shortage of bandwidth within military operations, and trade-offs to best support the mission are in constant competition. With this in mind, let’s invert our perspective and consider the sensors themselves.

Today’s high-definition camera technology enables the user to focus on objects at great distances with impressive precision. Analytics such as motion detection, face and person detection, and recognition can analyze this data quickly to extract actionable intelligence. However, egressing the video to a back-end system can be a major challenge.

These high-resolution cameras are bandwidth-voracious, and the DoD, especially in theatre, evaluates trade-offs to maximize transmission efficiency. It is common practice to reduce video resolution and frame rate and to compress the video for efficiency, but doing so degrades video analytics, especially biometric exploitation.
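One way to see why downscaling hurts biometric exploitation is to follow a single face through the resize. The sketch below assumes a face 60 pixels wide in a 1080-line frame and a minimum usable face width of 40 pixels; both numbers are hypothetical placeholders, since real requirements vary by algorithm.

```python
# Hypothetical minimum face width, in pixels, assumed for a recognition algorithm.
# Real requirements vary by algorithm and vendor.
MIN_FACE_WIDTH_PX = 40


def scaled_face_width(face_px: float, src_lines: int, dst_lines: int) -> float:
    """Face width after uniformly downscaling a frame from src_lines to dst_lines of vertical resolution."""
    return face_px * dst_lines / src_lines


face_at_1080 = 60  # hypothetical face width in a 1080-line frame
for lines in (1080, 720, 480, 360):
    width = scaled_face_width(face_at_1080, 1080, lines)
    verdict = "usable" if width >= MIN_FACE_WIDTH_PX else "too small"
    print(f"{lines}p: face is about {width:.0f} px wide ({verdict})")
```

Frame-rate reduction does not shrink faces, but it reduces the number of frames in which a moving subject's face appears, which lowers the chance of capturing a usable image.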

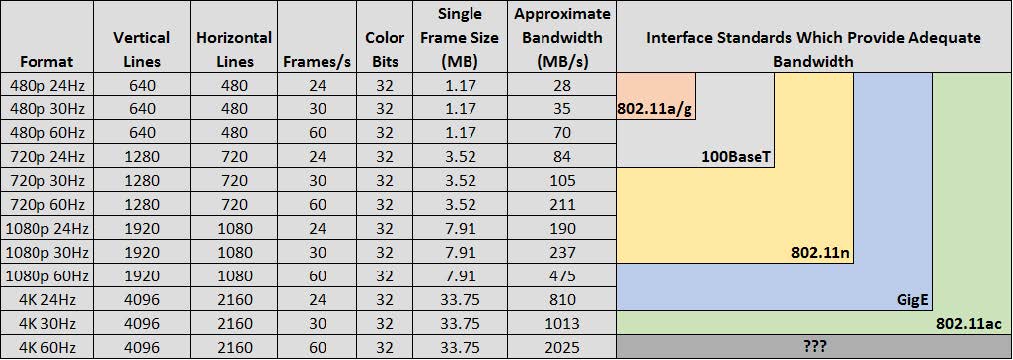

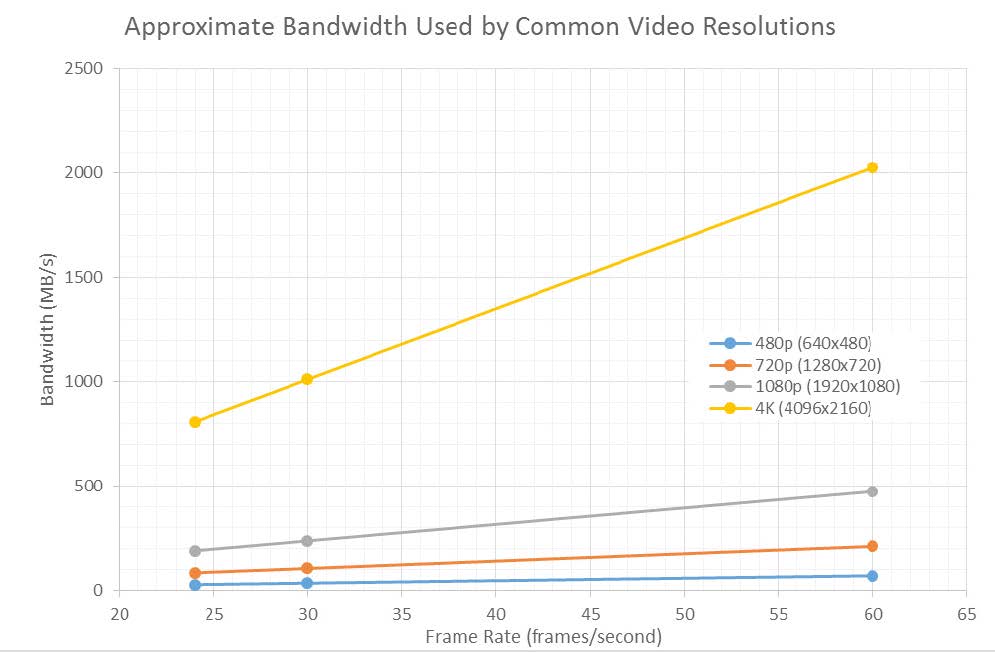

To make the data requirements of mainstream video technologies clearer, Figure 4 shows the approximate bandwidth used by common video formats and resolutions. The chart makes clear that as 4K video is used more widely in the field, network bandwidth requirements will increase dramatically.
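The values behind Figure 4 and Table 1 are not reproduced here, but the underlying arithmetic is pixels per frame × frames per second × bits per pixel. The sketch below uses 12 bits per pixel for uncompressed 8-bit 4:2:0 video and an assumed 0.1 bits per pixel after compression; that compressed figure is a hypothetical placeholder, since real codec bitrates depend on the codec, scene content and encoder settings.

```python
# Frame sizes in pixels (width, height).
FORMATS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K UHD": (3840, 2160),
    "4K DCI": (4096, 2160),
}

RAW_BITS_PER_PIXEL = 12   # 8-bit samples with 4:2:0 chroma subsampling
ASSUMED_CODED_BPP = 0.1   # hypothetical post-compression bits per pixel


def bitrate_mbps(width: int, height: int, fps: float, bits_per_pixel: float) -> float:
    """Bitrate in megabits per second for a given frame size, frame rate and bits per pixel."""
    return width * height * fps * bits_per_pixel / 1e6


for name, (w, h) in FORMATS.items():
    raw = bitrate_mbps(w, h, 30, RAW_BITS_PER_PIXEL)
    coded = bitrate_mbps(w, h, 30, ASSUMED_CODED_BPP)
    print(f"{name:7s} @30 fps: raw ~{raw:7.1f} Mbps, compressed ~{coded:5.1f} Mbps (assumed)")
```

Whatever bits-per-pixel value a given codec achieves, 4K UHD carries exactly four times the pixels of 1080p (and DCI 4K slightly more), so at equal compression efficiency it needs roughly four times the bandwidth.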

Table 1 shows approximate bandwidth requirements for various video formats and examples of interface standards that can accommodate those video streams.

Current State

Today, video is generally either streamed from the battlefield to a remote location for viewing or stored at the device for subsequent retrieval. Connectivity and bandwidth determine whether video can be streamed at all and, if so, at what resolution and frame rate. The quality of the video, in turn, affects how well video analytics can be leveraged.

Video analytics have become a valuable tool for quickly sifting through video content to find specific events of interest. For example, specialized algorithms can save soldier man-hours by quickly determining whether motion, people, faces, vehicles or other objects appear in a video. When successfully deployed in optimal environmental conditions, video analytics can enable an operator to find actionable intelligence within seconds or minutes, whereas previous brute-force methods could take hours or days.

Algorithms may not be capable of detecting all events. However, video analytics are especially powerful when dealing with large volumes of data too overwhelming for human processing. Video analytics guide analysts to the appropriate data sources to find the proverbial needle in the haystack.

Nonetheless, the benefits of video analytics are limited by the usability of the data. HD camera optics have matured to enable impressive results with analytics. Unfortunately, if the compromises made to reduce bandwidth include poor resolution or lower frame rates, the resulting video is unlikely to support a successful implementation of analytics, especially biometric exploitation of identities.

Emerging video formats and enhanced camera capabilities are enabling much greater information capture. These technologies are driven by the commercial market and demand for higher-definition products. By 2020, the overall market for 4K video technology is expected to reach $102.1 billion. [6] Likewise, the market for video analytics is estimated to reach $4.23 billion by 2021, with the adoption of cloud-based technologies significantly increasing demand for video analytics software and services. [7]

The sharpness in pixel density and overall integrity of video provided by 4K cameras is ideal for successful application of analytics. Motion detection, person detection, face detection, color filtering, gender estimation, age estimation, ethnicity estimation, license plate recognition and face recognition are all examples of mature video analytics that run exceptionally well on high quality video. However, 4K cameras also produce extremely large data streams.
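Motion detection is the simplest of these analytics. One basic approach, frame differencing, flags motion when enough pixels change noticeably between consecutive frames; fielded products typically use more robust background-modeling methods. The minimal pure-Python sketch below illustrates the idea, and its pixel and area thresholds are hypothetical defaults.

```python
from typing import List

Frame = List[List[int]]  # grayscale frame, one 0-255 intensity per pixel


def changed_fraction(prev: Frame, curr: Frame, pixel_threshold: int = 25) -> float:
    """Fraction of pixels whose intensity changed by more than pixel_threshold between frames."""
    changed = total = 0
    for prev_row, curr_row in zip(prev, curr):
        for a, b in zip(prev_row, curr_row):
            total += 1
            if abs(a - b) > pixel_threshold:
                changed += 1
    return changed / total if total else 0.0


def motion_detected(prev: Frame, curr: Frame,
                    pixel_threshold: int = 25, area_threshold: float = 0.01) -> bool:
    """Report motion when more than area_threshold of the pixels changed noticeably."""
    return changed_fraction(prev, curr, pixel_threshold) > area_threshold


still = [[10] * 4 for _ in range(4)]
moved = [row[:] for row in still]
moved[1][2] = 200  # one pixel brightens sharply
print(motion_detected(still, still))  # False
print(motion_detected(still, moved))  # True (1 of 16 pixels changed)
```

Higher-resolution input gives such detectors more pixels per object, which is one reason these analytics perform better on HD and 4K video.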

4K video is more than four times the size of 1080 HD video, as shown in Figure 4. Given that many military and government infrastructures can barely handle 1080 HD video streams today, this advancement in camera technology presents substantial bandwidth challenges, and these network limitations are not easy to overcome.

Applying analytics at the point of capture is the most sensible way to take advantage of HD and 4K cameras. The smart-edge video analytic concept deploys a surveillance infrastructure that pairs advanced camera optics with on-board processing to produce tailored, real-time alert notifications.

Table 1: Required bandwidth for various video formats and interface standards to support them. (Released)

Future State

Is there a silver bullet for successfully deploying video analytics in this bandwidth-constrained environment? Can the full capabilities of HD camera optics be exploited with this innovative technology? Yes, by migrating the analytics to what is referred to as ‘the edge.’

Processing video locally, at the sensor, provides multiple benefits. First, information extraction is performed on the full-resolution, high-fidelity imagery before the data is compressed, resulting in better actionable information. Second, information can be quickly processed locally because the transmission steps and related latency issues are removed from the process. [8]

Imagine an adaptive surveillance solution that intelligently deploys analytics to process video at the point of capture. This software-based solution pulls video from a camera and invokes a suite of analytics that run locally on a miniature processor to support a range of transmission options. The edge solution can automatically execute operator-defined business rules that maximize bandwidth efficiency based on motion detection, face detection, person detection and watchlisting. Modern biometric algorithms can also support loading and managing multiple watchlists at the edge. This allows the solution to compare streaming video with watchlist photos as it is captured and trigger near real-time alerts to a command post or a mobile device.
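Watchlist comparison of this kind is commonly implemented by converting each detected face into a numeric embedding with a face recognition model and comparing it against enrolled embeddings. The sketch below assumes the embeddings already exist (the model itself is not shown), uses cosine similarity, and applies a hypothetical 0.8 match threshold; the names `list_a`, `subject_1` and so on are placeholders.

```python
import math
from typing import Dict, List, Optional, Tuple

Embedding = List[float]
# watchlist name -> {identity -> enrolled face embedding}
Watchlists = Dict[str, Dict[str, Embedding]]


def cosine_similarity(a: Embedding, b: Embedding) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


def best_watchlist_match(probe: Embedding, watchlists: Watchlists,
                         threshold: float = 0.8) -> Optional[Tuple[str, str, float]]:
    """Return (watchlist, identity, score) for the highest-scoring match at or above threshold, else None."""
    best: Optional[Tuple[str, str, float]] = None
    for list_name, entries in watchlists.items():
        for identity, enrolled in entries.items():
            score = cosine_similarity(probe, enrolled)
            if score >= threshold and (best is None or score > best[2]):
                best = (list_name, identity, score)
    return best


# Toy 3-dimensional embeddings; real face models produce much longer vectors.
WATCHLISTS: Watchlists = {
    "list_a": {"subject_1": [1.0, 0.0, 0.0]},
    "list_b": {"subject_2": [0.0, 1.0, 0.0]},
}

match = best_watchlist_match([0.9, 0.1, 0.0], WATCHLISTS)
if match:
    name, identity, score = match
    print(f"ALERT: {identity} on {name} (score {score:.2f})")  # would be pushed to a command post
```

Because only the alert (and optionally a face crop) leaves the device, the bandwidth cost of a match is tiny compared with streaming the video itself.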

The overall system infrastructure supports remote management and ground-breaking situational awareness, helping operators quickly obtain actionable intelligence from video. A variety of enclosure options can be supported, including both overt and covert surveillance scenarios. Common smart city cameras can be equipped with small processors to support the overall edge analytic solution, as can more complex implementations that attach a cluster of cameras to a more powerful processing unit. Regardless of the configuration, the edge analytics solution can maintain a forensic recording of video on fully encrypted, detachable drives to support evidentiary testimony.

Figure 3: Impact of multi-hop wireless paths. [11] (Released)

Extracting additional content from video, fusing analytic information and better understanding events are all growing areas of research in academia, government and the commercial sector. After the Boston Marathon bombing, the FBI convened a workshop with the National Science Foundation and the Defense Advanced Research Projects Agency to better understand “the state of the art in algorithms being developed in academia that can support forensic analysis and identification in large volumes of images and videos.” [9] Together, these agencies explicitly assessed which challenges in image and video analysis are considered solved, nearly solved or over the horizon. [9] Given the market opportunity for video, we can expect substantial research growth within the commercial sector. This increased interest in algorithm development for video analysis also opens the door to research into video feed security protocols and procedures.

Summary

Today’s news broadcasts make it apparent that video is increasingly associated with tragic events across the globe. Terrorist and foreign fighter groups routinely record their propaganda and heinous crimes on camera. Similarly, other bad actors, such as cartels, use video to send brutal messages to their rivals, broadcast announcements and facilitate recruitment.

This data often gets published on social media and shared across the world. As such, these groups frequently give us a clue to their identity that we can harness for ongoing protection of our troops and citizens – a picture of their face.

Figure 4: Bandwidth used by common video formats and resolutions. (Released)

Camera technology is rapidly advancing. HD cameras are becoming more affordable, and they capture data with higher integrity. Faces sourced from these high-quality recordings are ‘usable’ for analytics such as face recognition. As such, the video we capture within our surveillance systems must be equally usable.

However, if we depend on back-end systems to exploit the video with analytics, it is inevitable that we will need to reduce resolution and frame rates due to bandwidth constraints. In this situation, analytics such as face recognition will be much less effective.

It is imperative that more intelligent camera solutions be deployed that can process analytics on the original high-integrity video and leverage the power of this automation to support more actionable intelligence. A smart edge-based camera ecosystem is the future of video surveillance. This ecosystem will allow tactical business rules to be programmed into cameras to determine what information is sent and when.
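A tactical rule set of this kind can be modeled as a list of conditions over the analytics' output, each mapped to a transmission action. The sketch below is illustrative only: the `Detections` fields, rule names and action labels are hypothetical, not any particular product's schema.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Detections:
    """Per-interval analytic results produced on the camera (hypothetical schema)."""
    motion: bool
    person: bool
    watchlist_hit: bool


@dataclass
class Rule:
    name: str
    condition: Callable[[Detections], bool]
    action: str  # hypothetical action label understood by the transmission layer


def actions_for(d: Detections, rules: List[Rule]) -> List[str]:
    """Return the actions of every rule whose condition holds, in rule order, without duplicates."""
    actions: List[str] = []
    for rule in rules:
        if rule.condition(d) and rule.action not in actions:
            actions.append(rule.action)
    return actions


RULES = [
    Rule("watchlist match", lambda d: d.watchlist_hit, "alert_command_post"),
    Rule("person present", lambda d: d.person, "send_clip"),
    Rule("motion without person", lambda d: d.motion and not d.person, "send_low_res_snapshot"),
]

print(actions_for(Detections(False, False, False), RULES))  # [] -> nothing transmitted
print(actions_for(Detections(True, True, True), RULES))     # ['alert_command_post', 'send_clip']
```

Keeping rules as data rather than hard-coded logic is what lets an operator retune what is sent, and when, for a given mission.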

Why waste bandwidth when there is no motion? If the goal is to only alert the command center when people are present, why not only send video when a person detection algorithm identifies that someone is walking in the surveilled area? If looking for specific terrorists or criminals, how valuable would it be to have access to 30 seconds of video both before and after a specific event, such as the suspect walking by a surveillance point? How valuable would it be to have video content automatically sent to an intelligence operator upon immediate event detection instead of an intelligence operator reviewing hours of video to determine events?
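Capturing video from before and after an event is typically done with a rolling buffer that always holds the most recent frames. The sketch below is a minimal version of that idea under stated assumptions: frames are opaque objects, the event trigger (for example, person detection or a watchlist match) comes from elsewhere, and the demo is scaled down from 30 seconds to a few frames.

```python
from collections import deque
from typing import Any, Deque, List, Optional


class EventClipRecorder:
    """Keeps a rolling pre-event buffer and, once triggered, assembles a pre+post event clip."""

    def __init__(self, fps: int, pre_seconds: int = 30, post_seconds: int = 30) -> None:
        if post_seconds < 1:
            raise ValueError("post_seconds must be at least 1")
        self._pre: Deque[Any] = deque(maxlen=fps * pre_seconds)
        self._post_frames = fps * post_seconds
        self._clip: Optional[List[Any]] = None
        self._remaining = 0

    def trigger(self) -> None:
        """Start a clip from the buffered history (ignored if a clip is already in progress)."""
        if self._clip is None:
            self._clip = list(self._pre)
            self._remaining = self._post_frames

    def push(self, frame: Any) -> Optional[List[Any]]:
        """Add a frame; return the finished clip once the post-event window is filled."""
        self._pre.append(frame)  # history keeps rolling even while a clip is recording
        if self._clip is None:
            return None
        self._clip.append(frame)
        self._remaining -= 1
        if self._remaining > 0:
            return None
        finished, self._clip = self._clip, None
        return finished


# Scaled-down demo: 1 fps, 3 s before and 2 s after an event on frame 5.
recorder = EventClipRecorder(fps=1, pre_seconds=3, post_seconds=2)
clips = []
for t in range(10):
    clip = recorder.push(t)
    if clip is not None:
        clips.append(clip)
    if t == 5:
        recorder.trigger()  # e.g. person detection or a watchlist match on frame 5
print(clips)  # [[3, 4, 5, 6, 7]]
```

At 30 fps a 30-second pre-event buffer holds 900 frames, so practical systems generally buffer compressed video rather than raw frames.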

All of these concepts are possible today with smart-camera edge analytics. These systems can be programmed to send video at resolutions and frame rates appropriate to whatever network is deployed. Technology will continue to progress and, with the evolution of enabling policies, military surveillance will undoubtedly become more agile and advanced as these intelligent solutions are deployed.
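Matching resolution and frame rate to the deployed network can be as simple as choosing the richest transmission profile that fits the measured link capacity, with some margin left for other traffic. The profiles, bitrates and 80 percent headroom below are hypothetical, and how available capacity is measured (which, as Figure 3 shows, can fall sharply over multi-hop links) is outside this sketch.

```python
from typing import List, NamedTuple, Optional


class Profile(NamedTuple):
    name: str
    mbps: float  # assumed bitrate for this resolution/frame-rate combination


# Hypothetical transmission profiles; real bitrates depend on codec, scene and settings.
PROFILES: List[Profile] = [
    Profile("4K @ 30 fps", 25.0),
    Profile("1080p @ 30 fps", 6.0),
    Profile("1080p @ 15 fps", 3.0),
    Profile("720p @ 15 fps", 1.5),
    Profile("480p @ 5 fps", 0.3),
]


def select_profile(available_mbps: float, headroom: float = 0.8) -> Optional[Profile]:
    """Pick the highest-bitrate profile that fits within a fraction (headroom) of the available capacity."""
    budget = available_mbps * headroom
    fitting = [p for p in PROFILES if p.mbps <= budget]
    return max(fitting, key=lambda p: p.mbps) if fitting else None


for link in (50.0, 10.0, 2.0, 0.2):
    choice = select_profile(link)
    print(f"{link:5.1f} Mbps link -> {choice.name if choice else 'alerts/metadata only'}")
```

When no video profile fits, the edge device can still send alerts and metadata, which is where on-camera analytics pay off most.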

References

1. Furstenberg, D. (2012, March). Intel: Meeting the Growing Bandwidth Demands of a Modern Military. MilsatMagazine. Retrieved from http://www.milsatmagazine.com/story.php?number=855426811 (accessed January 12, 2017).

2. Metcalfe, R. (2013, December). Metcalfe’s Law after 40 Years of Ethernet. Computer, 46(12), 26-31.

3. Gourè, D. (2013, June 4). The Military and the Iron Law of Bandwidth Usage. Retrieved from http://lexingtoninstitute.org/the-military-and-the-iron-law-of-bandwidth-usage/ (accessed January 12, 2017).

4. Office of the Director of Operational Test and Evaluation. (2016, January). 2015 annual report of the Director of Operational Test and Evaluation. Retrieved from http://www.dote.osd.mil/pub/reports/FY2015/pdf/other/2015DOTEAnnualReport.pdf (accessed January 12, 2017).

5. Leland, J., & Porche, I. (2004). Future Army Bandwidth Needs and Capabilities. Retrieved from http://www.rand.org/content/dam/rand/pubs/monographs/2004/RAND_MG156.pdf (accessed January 12, 2017).

6. Jukic, S. (2015, August 4). The market for 4K technology will explode to more than $100 billion by 2020. Retrieved from http://4k.com/news/4k-technology-market-expect-to-explode-to-100-billion-by-2020-8668/ (accessed January 12, 2017).

7. Markets and Markets. (2016, June). Video Analytics Market by Type (Solutions & Services), by Applications (Perimeter Intrusion Detection, Pattern Recognition, Counting & Crowd Management, ALPR, Incident Detection and Others), by Deployment Type, by Vertical, by Region – Global Forecast to 2021. Retrieved from http://www.marketsandmarkets.com/Market-Reports/intelligent-video-analytics-market-778.html (accessed January 12, 2017).

8. Jobling, C. (2013, July 31). Capturing, processing, and transmitting video: Opportunities and challenges. Avionics Design Newsletter. Retrieved from http://mil-embedded.com/articles/capturing-processing-transmitting-video-opportunities-challenges/ (accessed January 12, 2017).

9. Chellappa, R. (2014). Frontiers in Image and Video Analysis. NSF/FBI/DARPA Workshop Report. Retrieved from http://www.umiacs.umd.edu/~rama/NSF_report.pdf (accessed January 12, 2017).

10. Rayermann, P. (2003). Exploiting Commercial SATCOM: A Better Way. Parameters, 33(4), 54-66. Retrieved from http://strategicstudiesinstitute.army.mil/pubs/parameters/articles/03winter/rayerman.pdf (accessed January 12, 2017).

11. Holland, G., & Vaidya, N. (1999). Proceedings from ACM/IEEE International Conference on Mobile Computing and Networking ’99: Analysis of TCP Performance over Mobile Ad Hoc Networks. (pp. 219-230). New York, NY: ACM.