Biometric technologies offer major advantages over conventional identification and authentication methods. In particular, they are more secure, accurate, reliable, and user-friendly. To prove their identity or authenticate to a device, users need only present something that is always at hand, namely their biometric traits (e.g., fingerprints, voice, face). As a result, biometric technologies have become ubiquitous in both civilian and military applications. For example, the U.S. Department of Defense (DoD) is investigating means of integrating biometric verification systems into its Common Access Card (CAC) system [1]. Another potential near-term application is biometric verification for operators of military unmanned aerial vehicle systems.

While convenient, biometric traits alone cannot serve as confidential identifiers: they can be inadvertently disclosed, and once stolen or captured, they are difficult to replace or modify [2]. To what extent, then, can we rely on the security of biometric technologies? Unfortunately, there is overwhelming evidence that current systems are ill-prepared from a security perspective.

More precisely, traditional biometric systems are vulnerable to various physical attacks, which could result in the theft of biometric templates, illegal system access, denial of service, etc. [3]. This holds especially true for systems operating in hostile environments, where resistance to such attacks is paramount, yet difficult to provide at a low cost. To provide this resistance, we introduce a security framework called BLOcKeR: Biometric Locking by Obfuscation, Physically Unclonable Keys, and Reconfigurability.

Adversarial Model

In general, the adversary has one or more of the following goals: template theft/recovery, denial of service, and/or unauthorized card/device access. Considering the cost and accessibility of the device under attack, such attacks can be executed in three ways: non-invasive, semi-invasive, and invasive. Non-invasive attacks [4, 5], such as side-channel attacks, require the lowest cost and no physical tampering. However, in some cases, physical access to the device is required.

On the other hand, invasive attacks [6] require the highest cost and more intrusive physical tampering (e.g., circuit editing and microprobing). Compared to invasive attacks, semi-invasive attacks [7, 8] require moderate cost and some physical tampering (e.g., partial decapsulation and backside thinning). Although the equipment required for semi-invasive and invasive attacks might seem costly (> $1 million), it is worth noting that cheap alternatives are emerging. For instance, such machines can be rented for less than $200 per hour in any failure analysis laboratory, or acquired second-hand for $100,000 to $200,000 [9]. Thus, semi-invasive and invasive attacks are becoming practical enough that they are no longer exclusive to nation-states.

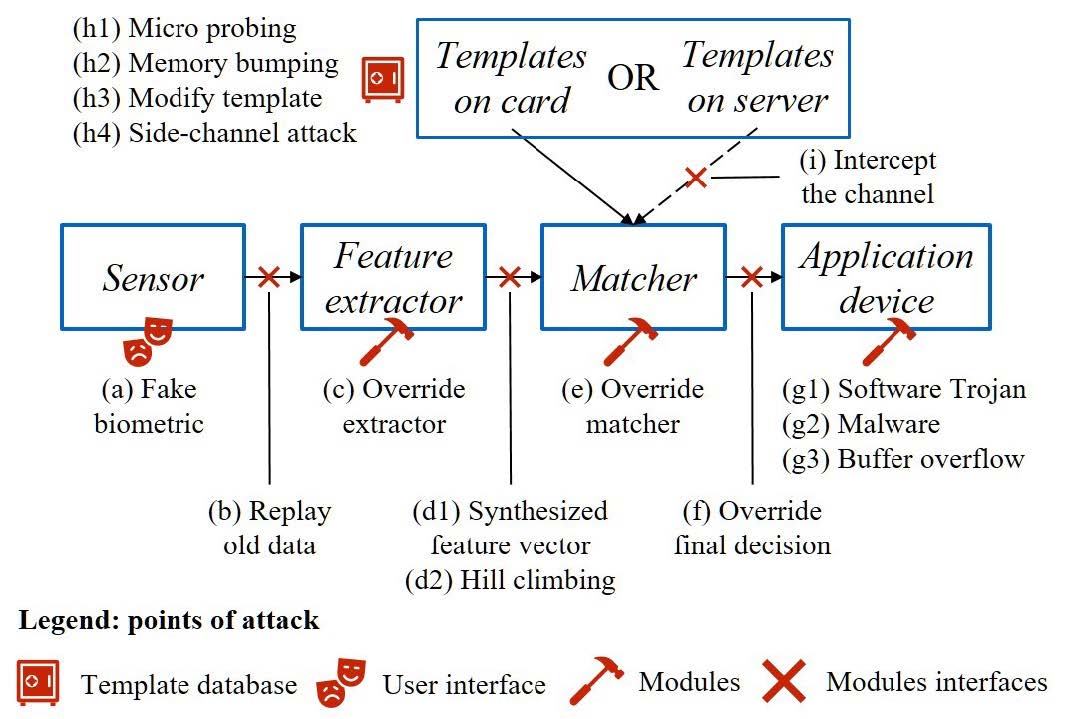

From another angle, the attacks can be categorized by the point of attack in a biometric system (e.g., template storage, user interface, modules, and module interfaces), as shown in Figure 1. In this figure, the sensor is the device used to collect the raw biometric as input. The application device refers to the software or hardware accepting the decision from the matcher. To reflect a realistic scenario, we assume that all the modules and the interfaces between them may be accessed by the attacker (e.g., through invasive attacks). As prime examples of the attack points depicted in Figure 1, template theft/modification attacks can occur at (h1-h4) and (i). Because attacks launched on the matcher module or its interfaces are also prominent and can be highly effective, we focus on them below:

Feature Extractor Output Attacks

The interface between the feature extractor and the matcher can be subject to a hill-climbing attack (d2), which iteratively submits synthetic representations of the user’s biometric (d1) until a successful recognition is achieved [10]. At each step, the synthetic data is updated according to the matching scores of previous attempts, so that the likelihood of a false accept increases.
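The score-guided search described above can be illustrated with a toy simulation. This is a minimal sketch, not the attack from [10]: the 64-bit binary feature vector, the agreeing-bits score, the acceptance threshold of 58, and all function names are assumptions chosen for illustration.

```python
import random

def match_score(template, candidate):
    """Score a leaky matcher might expose: the number of agreeing bits."""
    return sum(t == c for t, c in zip(template, candidate))

def hill_climb(score_fn, n_bits, threshold, rng, max_queries=10_000):
    """Flip one random bit per query and keep the flip only if the score improves."""
    candidate = [rng.randint(0, 1) for _ in range(n_bits)]
    best = score_fn(candidate)
    queries = 1
    while best < threshold and queries < max_queries:
        i = rng.randrange(n_bits)
        candidate[i] ^= 1
        score = score_fn(candidate)
        queries += 1
        if score > best:
            best = score          # keep the improving flip
        else:
            candidate[i] ^= 1     # revert the flip
    return candidate, best, queries

rng = random.Random(0)
template = [rng.randint(0, 1) for _ in range(64)]   # hypothetical enrolled template
threshold = 58                                       # hypothetical acceptance threshold

candidate, best, queries = hill_climb(
    lambda c: match_score(template, c), 64, threshold, rng
)
print(f"accepted: {best >= threshold}, queries used: {queries}")
```

The attack works only because every query returns a score that reveals whether the last change moved the candidate closer to the template.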

Override Matcher

This attack forces the matcher to produce a high matching score, bypassing the biometric authentication system regardless of the values obtained from the input feature set [11].

Override the Output of the Matcher

As with overriding the matcher module itself, the attacker can simply override the result declared by the matcher module. The module interface attacks mentioned above aim to gain unauthorized access to the system.

Figure 1. Points of attacks: vulnerabilities in a traditional biometric system [26]

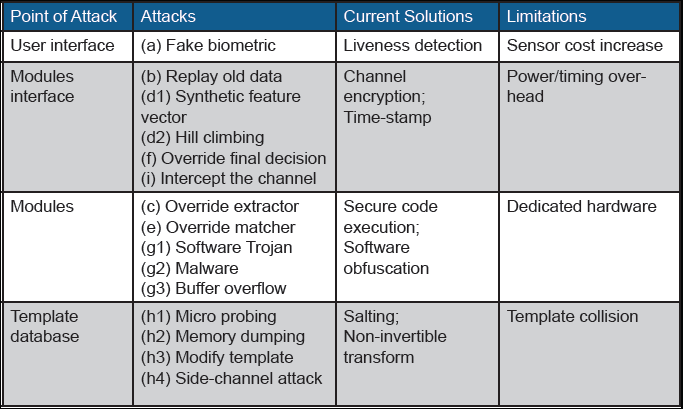

Table 1. Vulnerabilities, current solutions, and their limitation for a traditional biometric system

Existing Countermeasures

Table 1 summarizes the vulnerabilities in a traditional biometric system, the countermeasures currently available for each, and the limitations of those countermeasures. As the table shows, available countermeasures for protecting biometric templates are extremely limited [12]. For instance, in the most common instantiation (match-on server), the templates exist on a centralized server in a raw, encrypted, salted, or non-invertible transformed form where they are susceptible to theft. As a prime example, in 2015 the U.S. Office of Personnel Management reported that 5.6 million fingerprints were stolen from its database [13]. In the alternative instantiation (match-on card), biometric templates are kept locally on the card/device, where physical attacks (e.g., microprobing [14], side-channel attacks [15]) can be used to extract the template and/or encryption keys from the card/device.

Match-on card/devices are also vulnerable to attacks on software and hardware implementations that bypass/override matching schemes (template modification, code reuse, buffer overflow, cold boot, hill-climbing, and so on). Multiple modules of the biometric system and the interfaces between them may also be compromised. For instance, a 2014 HP Development Company, L.P. study found that 70 percent of IoT devices did not use encryption when transmitting sensitive data across the LAN and internet [16].

BLOcKeR: A Biometric Locking Paradigm

BLOcKeR combines biometrics and configurability with two recent advances in hardware security: physically unclonable functions (PUFs) and hardware obfuscation. A PUF can be described as an object’s fingerprint. Like a biometric, which expresses a human’s identity, a PUF is an expression of an inherent and unclonable instance-specific feature of a physical object [17]. For integrated circuits (ICs), these instance-specific features are induced by manufacturing process variations and can be captured by the input/output behavior of the PUF, the so-called challenge-response pair (CRP) behavior, which is a physical mapping from challenges to responses. BLOcKeR leverages the inherent, instance-specific properties of a PUF to tie a device to the biometrics of its owner.

Configurability in hardware refers to the ability to customize logic gates of an IC and their connections in the field. For field-programmable gate arrays (FPGAs), this customization is referred to as a bitstream, and it is typically stored in an internal or external non-volatile memory. Hardware obfuscation encapsulates a series of techniques which lock a chip or system by blocking its normal function at the hardware level until a correct “key” is applied [18]. Without a correct key, the device is locked. In a typical instantiation, only the designer or some other authorized party can compute the key that unlocks the device.

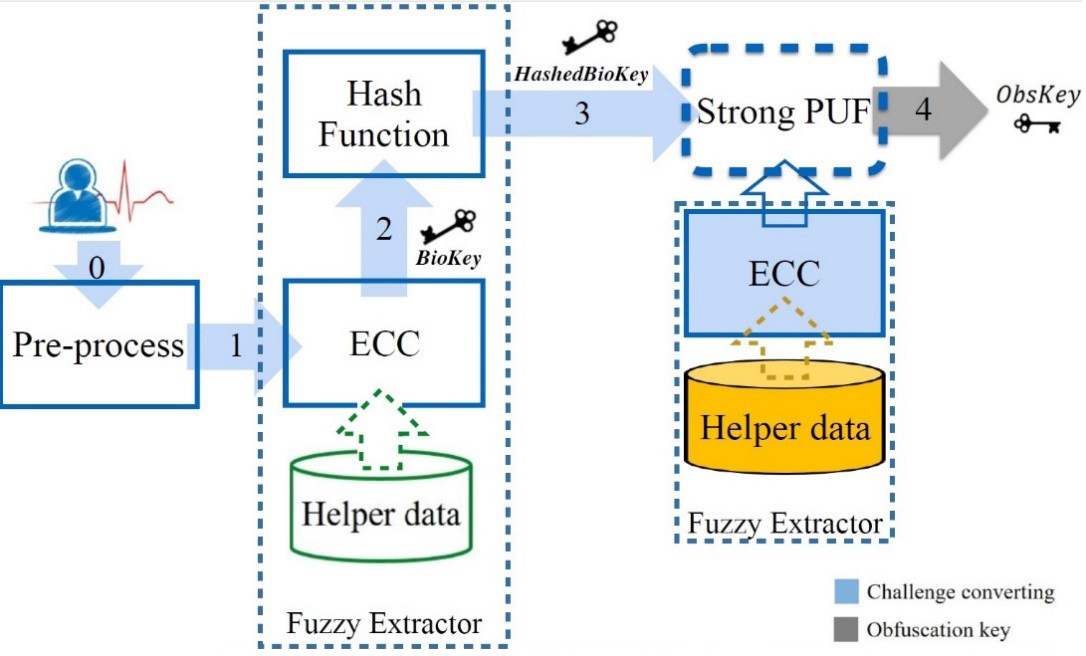

Figure 2. How BLOcKeR generates keys from biometrics

Clearly, this can help to protect against various access control circumvention attacks. Before elaborating on this, we should take a closer look at how BLOcKeR works. As shown in Figure 2, BLOcKeR is composed of modules orchestrated carefully to achieve a high degree of robustness against attacks. These modules and their functionalities can be described as follows:

Module 1 – Pre-processing

This module pre-processes the raw biometric signal, removing noise and extracting features.

Module 2 – Fuzzy Extraction

The pre-processing is followed by fuzzy extraction [11]. The primary purpose of this module is to generate reproducible binary BioKeys and transform them into uniformly distributed ones, called HashedBioKeys. This module plays an important role in any biometric technology. Without it, the two requirements for a key cannot be met: the key must be reproducible from repeated measurements of the biometric, and it must be uniformly distributed.
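To make the two requirements concrete, the following is a minimal sketch of one common fuzzy-extractor construction (code-offset with a repetition code, followed by a hash). The source does not state which construction BLOcKeR uses, so the repetition code, SHA-256, the 40-bit feature vector, and all function names here are illustrative assumptions.

```python
import hashlib
import secrets

REP = 5  # assumed repetition factor: corrects up to 2 bit flips per 5-bit group

def encode(bits):
    """Repetition-code encoder: repeat every key bit REP times."""
    return [b for b in bits for _ in range(REP)]

def decode(bits):
    """Majority vote over each group of REP bits."""
    return [int(sum(bits[i:i + REP]) > REP // 2) for i in range(0, len(bits), REP)]

def xor(a, b):
    return [x ^ y for x, y in zip(a, b)]

def hash_bits(bits):
    """Hash the BioKey into a uniformly distributed HashedBioKey (hex string)."""
    return hashlib.sha256(bytes(bits)).hexdigest()

def generate(features):
    """Enrollment: bind a fresh random BioKey to the features via helper data."""
    bio_key = [secrets.randbelow(2) for _ in range(len(features) // REP)]
    helper = xor(features, encode(bio_key))      # public helper data
    return hash_bits(bio_key), helper

def reproduce(noisy_features, helper):
    """Authentication: recover the BioKey from a noisy re-measurement."""
    bio_key = decode(xor(noisy_features, helper))
    return hash_bits(bio_key)

# Hypothetical 40-bit feature vector and a re-measurement with four bit errors.
features = [secrets.randbelow(2) for _ in range(40)]
hashed_bio_key, helper = generate(features)

noisy = list(features)
for i in (0, 7, 13, 22):          # one flipped bit in four different groups
    noisy[i] ^= 1

impostor = [b ^ 1 for b in features]   # every bit differs from the enrolled user
print(reproduce(noisy, helper) == hashed_bio_key)     # same key despite noise
print(reproduce(impostor, helper) == hashed_bio_key)  # different biometric, different key
```

The error-correcting step provides reproducibility, and the final hash provides uniformity; a practical design would use a stronger code and account for the entropy leaked by the helper data.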

Module 3 – Post-processing

This step implements one of the core ideas of BLOcKeR: tying a device to the biometrics of its owner. As can be seen in Figure 2, the PUF in this step is paired with a fuzzy extractor, a post-processing add-on that compensates for the noisy nature of PUF responses.

The output of this module is called ObsKey. As the name implies, only this key can unlock the obfuscated bitstream; without it, the device is not functional (i.e., it remains in a locked mode).

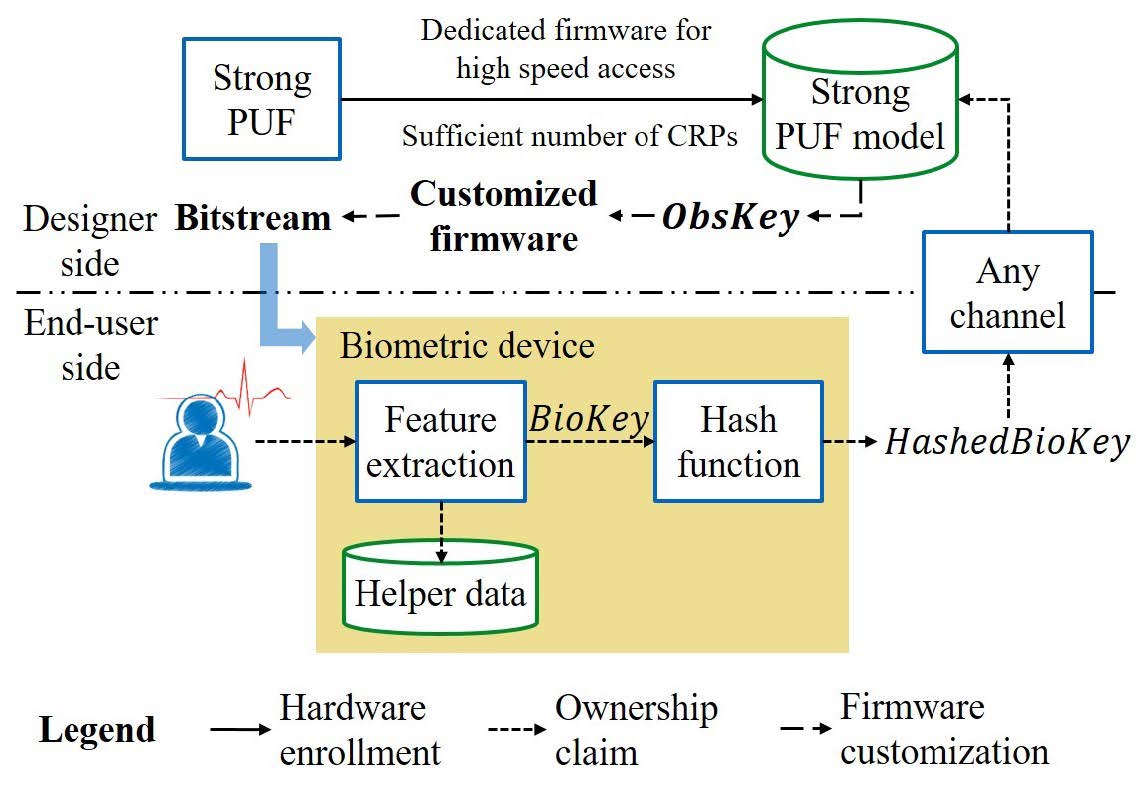

BLOcKeR for Authentication and Enrollment

The flow that we have already described is exactly what happens in a biometric authentication scenario. Note that the helper data used in the authentication flow should be generated during the enrollment flow described below. As depicted in Figure 3, the enrollment flow consists of three phases: hardware enrollment, ownership claim, and firmware customization.

Hardware Enrollment Phase

Before the device is sent to the market or field, the designer builds a mathematical PUF model for each device using dedicated firmware and stores the models in a secure database. This model can be built, for instance, by applying a machine learning algorithm, as proposed in the PUF-related literature [19]. To this end, the firmware enables the designer to efficiently collect a sufficient number of CRPs. To ensure the confidentiality and integrity of the measurements, this collection should be performed in a trusted environment. After the PUF model is enrolled, access to the PUF is disabled (ideally in hardware). The device then reaches the user, potentially through an insecure supply chain. Since neither the user nor an attacker has direct access to the PUF CRPs after this point, the prediction model is accessible only to the designer.
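As an illustration of how a PUF model might be learned from collected CRPs, the sketch below fits a perceptron to a simulated arbiter PUF using the standard additive delay model. The source does not name the PUF type or the learning algorithm, so the arbiter PUF, the perceptron, the 16-stage challenge length, the noise-free responses, and all names are assumptions.

```python
import random

N_STAGES = 16  # hypothetical challenge length

def parity_features(challenge):
    """Map a 0/1 challenge to the +/-1 parity vector of the additive delay model."""
    phi, prod = [], 1
    for bit in reversed(challenge):
        prod *= 1 - 2 * bit           # 0 -> +1, 1 -> -1
        phi.append(prod)
    phi.reverse()
    return phi + [1]                  # constant term for the arbiter bias

def respond(weights, phi):
    """One-bit response: the sign of the accumulated delay difference."""
    return int(sum(w * x for w, x in zip(weights, phi)) > 0)

def train_perceptron(crps, epochs=50):
    """Fit model weights to (feature vector, response) pairs."""
    weights = [0.0] * (N_STAGES + 1)
    for _ in range(epochs):
        for phi, response in crps:
            error = response - respond(weights, phi)
            if error:
                weights = [w + error * x for w, x in zip(weights, phi)]
    return weights

rng = random.Random(1)
# Simulated silicon: random delay parameters stand in for process variation.
device_delays = [rng.gauss(0, 1) for _ in range(N_STAGES + 1)]

def random_phi():
    return parity_features([rng.randint(0, 1) for _ in range(N_STAGES)])

# CRPs collected by the designer in the trusted environment.
training_crps = [(phi, respond(device_delays, phi))
                 for phi in (random_phi() for _ in range(2000))]
puf_model = train_perceptron(training_crps)

held_out = [random_phi() for _ in range(1000)]
accuracy = sum(respond(puf_model, phi) == respond(device_delays, phi)
               for phi in held_out) / len(held_out)
print(f"model agrees with the device on {accuracy:.1%} of unseen challenges")
```

Once such a model is stored, the designer can predict responses to challenges that were never measured, which is what allows direct access to the physical PUF to be disabled afterwards.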

Ownership Claim Phase

Ownership is claimed by presenting the legitimate user’s biometric signal to the device and operating it. Note that this step occurs only once, although the hardware platform on which BLOcKeR is implemented could be configured several times. The HashedBioKey obtained in this step (see Figures 2 and 3) may be transmitted to the designer through any public channel without dedicated protection. This is possible because the hash function is non-invertible: the raw biometric key (BioKey) cannot be deduced from its hash value. Note that in BLOcKeR, the raw biometric template is never stored on the device and never sent to the designer/vendor.

Firmware Customization Phase

The challenge received by the designer (i.e., the HashedBioKey from the ownership claim phase) is fed into the PUF model (see the description of the Hardware Enrollment Phase) to compute a unique response that depends on both the device and the biometric (ObsKey, see Figures 2 and 3). Using this obfuscation key, the designer produces an obfuscated bitstream, which is sent to the user and loaded into the device. Note that since the physical device with the PUF is no longer accessible to the designer/vendor, this step is only possible with the previously generated PUF model in the vendor’s possession. Because this bitstream is derived from both the device’s PUF and the user’s biometric, it is unique and will only work with the enrolled device and user.
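The data flow of this phase can be sketched end to end. This is a toy model under loud assumptions: a keyed hash stands in for both the physical PUF and the vendor's model of it (i.e., a perfect model with noise-free responses), and XOR with a hash-derived keystream stands in for hardware obfuscation, which in practice locks the logic itself rather than encrypting a file. All names and byte strings are hypothetical.

```python
import hashlib
import hmac

def puf_response(device_params: bytes, challenge: bytes) -> bytes:
    """Stand-in for the PUF on the device and for the vendor's model of it."""
    return hmac.new(device_params, challenge, hashlib.sha256).digest()

def keystream(key: bytes, length: int) -> bytes:
    """Expand a key into `length` bytes by hashing key || counter."""
    out, counter = b"", 0
    while len(out) < length:
        out += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]

def apply_key(data: bytes, key: bytes) -> bytes:
    """Toy lock/unlock: XOR with the keystream (its own inverse)."""
    return bytes(d ^ k for d, k in zip(data, keystream(key, len(data))))

# Hardware enrollment (earlier phase): the vendor holds a model of this device.
device_params = b"process variation of device #1"   # hypothetical
vendor_model = device_params                         # assumed perfect model

# Ownership claim: only the HashedBioKey leaves the device.
hashed_bio_key = hashlib.sha256(b"BioKey of enrolled user").digest()

# Firmware customization at the vendor.
bitstream = b"functional FPGA configuration" * 4     # placeholder payload
obs_key_vendor = puf_response(vendor_model, hashed_bio_key)
obfuscated_bitstream = apply_key(bitstream, obs_key_vendor)

# Authentication on the device: the right user regenerates the same ObsKey.
obs_key_device = puf_response(device_params, hashed_bio_key)
unlocked = apply_key(obfuscated_bitstream, obs_key_device)

# A different user or a different device yields a different ObsKey.
wrong_user = hashlib.sha256(b"BioKey of another user").digest()
wrong_user_result = apply_key(obfuscated_bitstream, puf_response(device_params, wrong_user))
wrong_device_result = apply_key(obfuscated_bitstream, puf_response(b"device #2", hashed_bio_key))

print(unlocked == bitstream, wrong_user_result == bitstream, wrong_device_result == bitstream)
```

The sketch shows why the bitstream works only for the enrolled pair: the ObsKey depends on both the device parameters and the HashedBioKey, and neither the raw biometric nor the PUF itself is needed at the vendor.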

Figure 3. The BLOcKeR enrollment flow consists of the hardware enrollment, ownership claim, and firmware customization. In this figure, the three major phases are marked by different lines: the hardware enrollment in solid lines, the ownership claim in short dashed lines, and the firmware customization in long dashed lines. To avoid confusion, we do not show the fuzzy extractor applied to the PUF.

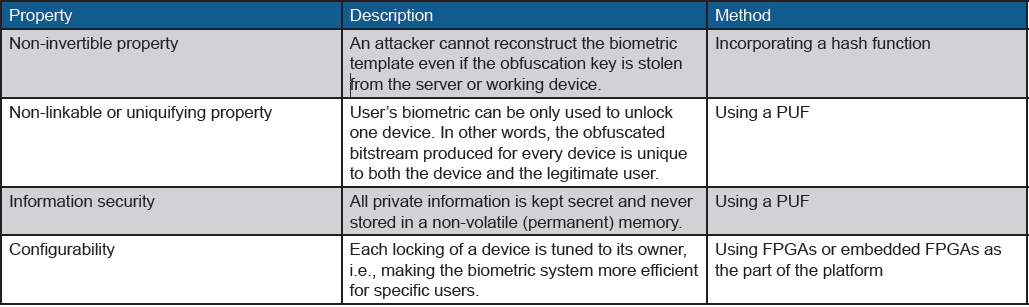

Security-related Properties of BLOcKeR

Table 2 presents notable features of BLOcKeR and the methods through which the respective feature can be made available. BLOcKeR addresses the issues summarized in Table 1 without imposing any significant limitation—unlike current solutions.

For instance, in addition to all the useful properties that BLOcKeR exhibits, we stress that it avoids communication and storage of any form of the biometric template (raw, salted, encrypted, or quantized) at both the server and the device/card. This eliminates all attacks that target a stored template (whether in raw, encrypted, or salted form). In Figure 1, the attacks (h1-h4) and (i) cannot be applied due to the absence of the attack target.

BLOcKeR overcomes attacks on matching schemes, since no such scheme is implemented in our system. Instead, BLOcKeR uses a fuzzy extractor that is often paired with a PUF to address the issue with the noisy measurements. Hence, the attack (f) depicted in Figure 1 is prohibited due to the absence of the matcher module in BLOcKeR.

Nevertheless, the attacker may still attempt a hill-climbing attack by injecting a synthesized feature vector. This attack improves the key bit by bit by observing the behavior of the system, and all the key bits must be examined before the attack terminates. However, the hardware obfuscation in BLOcKeR thwarts this attack by magnifying any single-bit error. For instance, a single-bit difference in an FPGA LUT may turn a NAND gate into an XOR gate. Such an alteration may change the system behavior randomly, and this randomization gives the attacker no help in obtaining the correct key.
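The effect of error magnification on a score-guided search can be illustrated numerically. In the sketch below, SHA-256 is used purely as a proxy for an obfuscated circuit whose behavior changes unpredictably under any key error; the real degree of magnification depends on the obfuscation design. The 64-bit key, the 256 observed output bits, and all names are assumptions.

```python
import hashlib
import random

KEY_BITS = 64  # hypothetical key length

def observable_behavior(key_bits):
    """Proxy for the outputs of a circuit configured with key_bits (256 bits)."""
    digest = hashlib.sha256(bytes(key_bits)).digest()
    return [(byte >> i) & 1 for byte in digest for i in range(8)]

def agreement(a, b):
    """Fraction of output bits on which two behaviors agree."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def mean_agreement(correct_key, wrong_bits, rng, trials=50):
    """Average agreement with correct behavior for guesses with `wrong_bits` errors."""
    reference = observable_behavior(correct_key)
    total = 0.0
    for _ in range(trials):
        guess = list(correct_key)
        for i in rng.sample(range(KEY_BITS), wrong_bits):
            guess[i] ^= 1
        total += agreement(reference, observable_behavior(guess))
    return total / trials

rng = random.Random(7)
correct_key = [rng.randint(0, 1) for _ in range(KEY_BITS)]

near = mean_agreement(correct_key, 1, rng)    # guesses one bit away from the key
far = mean_agreement(correct_key, 32, rng)    # guesses half the bits away
print(f"1 wrong bit: {near:.3f}, 32 wrong bits: {far:.3f}")
```

Under this proxy, a guess that is one bit from the correct key looks no closer than a guess that is half wrong (both agree with the correct behavior on roughly half of the output bits), so the attacker has no score gradient to climb.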

One sample application is the operation of a military drone, where the warfighter operating the drone is the user, the manufacturer is a government lab or defense contractor, and the research lab or other trusted party is the distributor who enrolls the warfighter and activates the drone. Besides the advantages discussed above, BLOcKeR provides other compelling features, such as anti-reverse engineering. In this case, the attacker is defined as the enemy who captures the drone.

One objective of the attacker is to reverse-engineer the drone in order to copy the design or develop attacks. However, the firmware of the drone remains obfuscated unless the correct biometric is presented. The adversary cannot even operate the drone to learn from it because they do not have the correct biometric, which also rules out satisfiability attacks on the obfuscated circuit [20].

Conclusion

The BLOcKeR biometric system combines hardware security primitives—hardware obfuscation and PUF—and offers unique benefits, such as configurability and non-linkability. More importantly, unlike traditional biometric systems, BLOcKeR does not require template storage. These advantages make BLOcKeR resistant to various attacks that target template security/privacy and unauthorized system access. The principles outlined above can assist DoD efforts to maintain the security of biometrics-enabled authentication and verification systems. This complicated task will only grow in importance as the use of biometric systems spreads from administrative-focused access applications to use on the battlefield.

Table 2. BLOcKeR noteworthy features

References

1. Uzun, E., Chung, P. H., Essa, I., & Lee, W. (2018). Defending against biometric mimicry: Real-time CAPTCHA-based facial and voice recognition. Journal of the Homeland Defense & Security Information Analysis Center, 5(3), 4–9. Retrieved from https://www.hdiac.org/wp-content/uploads/Real-time_CAPTCHA_Biometrics_V5I3.pdf

2. Cimato, S., Gamassi, M., Piuri, V., Sassi, R., & Scotti, F. (2008). Privacy-aware biometrics: Design and implementation of a multimodal verification system. 2008 Annual Computer Security Applications Conference (ACSAC). doi:10.1109/acsac.2008.13

3. Roberts, C. (2007). Biometric attack vectors and defences. Computers & Security, 26(1), 14–25. doi:10.1016/j.cose.2006.12.008

4. Weber, S., Karger, P. A., & Paradkar, A. (2005). A software flaw taxonomy. Proceedings of the 2005 Workshop on Software Engineering for Secure Systems – building Trustworthy Applications – SESS 05. doi:10.1145/1083200.1083209

5. Yang, B., Wu, K., & Karri, R. (2004). Scan based side channel attack on dedicated hardware implementations of data encryption standard. Proceedings of the International Test Conference on International Test Conference (ITC’04), 339–344. Retrieved from https://www.computer.org/csdl/proceedings/itc/2004/2741/00/01386969.pdf

6. Marinissen, E. J., Wachter, B. D., Smith, K., Kiesewetter, J., Taouil, M., & Hamdioui, S. (2014). Direct probing on large-array fine-pitch micro-bumps of a wide-I/O logic-memory interface. 2014 International Test Conference. doi:10.1109/test.2014.7035314

7. Skorobogatov, S. (2010). Flash memory ‘bumping’ attacks. Cryptographic Hardware and Embedded Systems, CHES 2010 Lecture Notes in Computer Science, 158–172. doi:10.1007/978-3-642-15031-9_11

8. Skorobogatov, S. (2005, April). Semi-invasive attacks – A new approach to hardware security analysis [Technical white paper]. University of Cambridge Computer Laboratory. Retrieved from https://www.cl.cam.ac.uk/techreports/UCAMCL-TR-630.pdf

9. Axiom Test Equipment. (n.d.). Retrieved from https://www.axiomtest.com/

10. Maiorana, E., Hine, G. E., & Campisi, P. (2015). Hill-climbing attacks on multibiometrics recognition systems. IEEE Transactions on Information Forensics and Security, 10(5), 900–915. doi:10.1109/tifs.2014.2384735

11. Delvaux, J., Gu, D., Verbauwhede, I., Hiller, M., & Yu, M. (2016). Efficient fuzzy extraction of PUF-induced secrets: Theory and applications. Lecture Notes in Computer Science Cryptographic Hardware and Embedded Systems – CHES 2016, 412–431. doi:10.1007/978-3-662-53140-2_20

12. Jain, A. K., Nandakumar, K., & Nagar, A. (2008). Biometric template security. EURASIP Journal on Advances in Signal Processing, 2008(1), 579416. doi:10.1155/2008/579416

13. Peterson, A. (2015, September 23). OPM says 5.6 million fingerprints stolen in cyberattack, five times as many as previously thought. Washington Post. Retrieved from https://www.washingtonpost.com/news/the-switch/wp/2015/09/23/opm-now-says-more-than-five-million-fingerprints-compromised-in-breaches/?noredirect=on&utm_term=.202eb711fca1

14. Wang, H., Forte, D., Tehranipoor, M. M., & Shi, Q. (2017). Probing attacks on integrated circuits: Challenges and research opportunities. IEEE Design & Test, 34(5), 63–71. doi:10.1109/mdat.2017.2729398

15. Tao, Z., Ming-Yu, F., & Bo, F. (2007). Side-channel attack on biometric cryptosystem based on keystroke dynamics. The First International Symposium on Data, Privacy, and E-Commerce (ISDPE 2007). doi:10.1109/isdpe.2007.48

16. HP. (2014, July 29). HP study reveals 70 percent of internet of things devices vulnerable to attack. Retrieved from https://www8.hp.com/us/en/hp-news/press-release.html?id=1744676&mtx-s=rss-corp-news#.Wtjkh4jwZaR

17. Maes, R., & Verbauwhede, I. (2010). Physically unclonable functions: A study on the state of the art and future research directions. In A.-R. Sadeghi & D. Naccache (Eds.), Information Security and Cryptography: Towards Hardware-Intrinsic Security (pp. 3–37). Berlin, Springer.

18. Forte, D., Bhunia, S., & Tehranipoor, M. M. (2017). Hardware Protection through Obfuscation. Berlin: Springer. doi:10.1007/978-3-319-49019-9

19. Ganji, F. (2018). On the Learnability of Physically Unclonable Functions. Berlin: Springer. doi:10.1007/978-3-319-76717-8

20. Subramanyan, P., Ray, S., & Malik, S. (2015). Evaluating the security of logic encryption algorithms. 2015 IEEE International Symposium on Hardware Oriented Security and Trust (HOST). doi:10.1109/hst.2015.7140252